Ilya Sutskever’s New Playbook for AGI

What his rare interview with Dwarkesh Patel reveals about the future of AI, the end of scaling, Safe Superintelligence Inc., and why humans still win at learning

TLDR for busy people

Here is the quick version of the entire interview in under 60 seconds.

▫️ The age of scaling is ending.
▫️ Generalization is the real frontier.
▫️ AGI will start as a superintelligent learner, not an all-knowing oracle.
▫️ Safe Superintelligence Inc. is a single mission company.
▫️ Timelines are short.

For founders, investors, and researchers, this interview marks a turning point. The story is shifting from giant models to smarter models. The next decade of AI will be shaped by breakthroughs in learning, alignment, and continual adaptation.

Why this conversation matters

Ilya Sutskever (OpenAI co-founder) rarely speaks publicly. When he does, the industry listens. He helped drive the deep learning revolution with AlexNet, sequence models, and GPT-3. Dwarkesh Patel is the interviewer who can actually extract new ideas from people like Sutskever. This discussion is the clearest window we have into what one of the defining minds of modern AI believes comes next.

1. The age of scaling is ending

For the last five years, the strongest force in AI has been simple. Sutskever calls this period the age of scaling. He now believes this era is reaching its limit. According to him:

His line that caught everyone’s attention: “It is back to the age of research again, just with big computers.”

The next breakthroughs will not come from 10 trillion parameter models. They will come from new training methods, new algorithms, and new ways to make models learn efficiently.

The United States is in a strong position for this shift. The country holds the largest concentration of AI talent, compute, and early stage capital. The next wave will be driven by American research labs that figure out what scaling alone cannot.

🤖 Quick break

If you ever wish you had an extra teammate handling the small but endless tasks, Sintra’s AI Helpers are actually great. They manage your social posts, reply to emails, update your site, and basically work nonstop without needing you to supervise them. Worth checking out if you want to save hours every week 👀 (code VCCORNER gets you 70% off)

2. The generalization gap is the crux

This was the most important technical insight in the interview. Sutskever says today’s models are extremely capable but generalize dramatically worse than humans. You can see this everywhere:

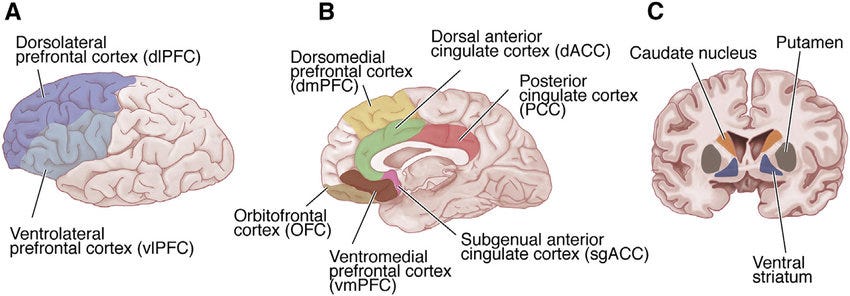

Humans do not behave like this. Two ideas from Sutskever explain why humans beat machines at generalization.

Humans come with powerful priors

We arrive preloaded with structure for vision, movement, physics, social interactions, and spatial reasoning. Evolution gave us a compressed package of extremely useful information.

Humans have an internal value system

Sutskever shares the story of a man who lost emotional processing after brain damage. Emotions act as dense, continuous reward signals that guide learning. Until we solve this, modern AI will continue to stumble outside its comfort zone.

3. What AGI actually looks like

Sutskever gives one of the clearest descriptions of AGI I have ever heard. Instead of imagining an all-knowing entity, think of AGI as:

A superintelligent fifteen year old that can learn any job extremely fast.

Not omniscient. This is a fundamental shift from the original OpenAI definition of AGI.

Dwarkesh captures it perfectly:

A mind that can learn to do every job is already superintelligent, even if it does not know every job at deployment.

Sutskever agrees. In this world:

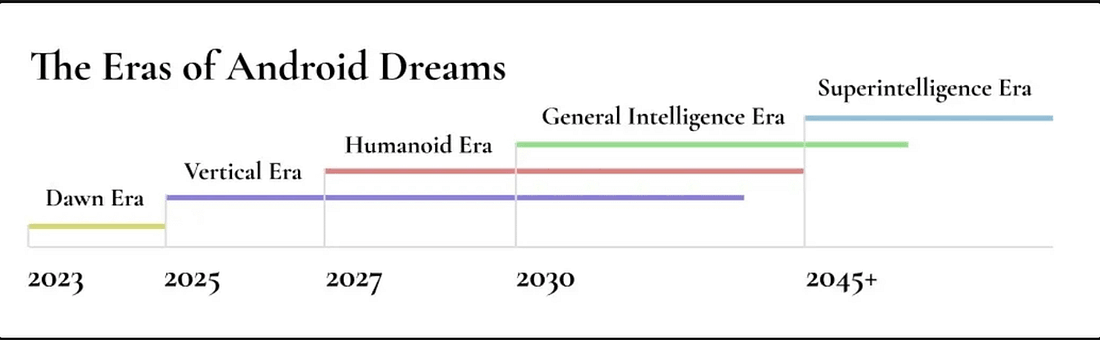

This is where the acceleration happens. A million learning agents in the real world, each mastering a different domain, and merging their insights continuously. This is the future most people are not yet imagining.

4. Inside Safe Superintelligence Inc.

SSI is structured around a single goal. Build a safe superintelligent AI. No side products. A few things stand out:

Their strategy is research heavy

Sutskever’s belief is that we are entering an era where ideas beat scale.

Their deployment philosophy is gradual

Even if they aimed directly at superintelligence, the releases would be phased.

Their technical approach is different

Sutskever does not reveal details.

The United States has become the epicenter of AGI development, and SSI represents a new wave of labs born from American founders, American capital, and American technical culture.

5. Alignment, values, and the question that matters

The alignment part of the interview is quietly one of the most important. Sutskever believes that alignment is largely a generalization problem. He proposes one potential alignment objective:

Make superintelligent systems care about sentient life. Not only humans.

Dwarkesh points out the obvious tension. Sutskever agrees and treats it as one of several serious options, not the final answer. He also suggests limiting the raw power of the strongest systems. The most interesting idea is that multiple AIs will coexist, specialize, and balance each other.

6. The timeline that surprised everyone

When asked directly, Sutskever gives a simple answer.

Five to twenty years.

That is his estimate for AI that learns like a human and then scales beyond us. This is not science fiction. Even more interesting is what he describes as the path:

His view is optimistic but realistic.

7. How Ilya chooses the ideas that change the world

The interview ends with a personal lesson from Sutskever. He says the way he picks research bets is through an aesthetic. He looks for:

He avoids ideas that feel forced or patched together. This gives him conviction when experiments fail. It is a mindset founders can use too.

Final thoughts

This interview feels like a turning point. The story of AI is moving away from bigger LLMs and towards smarter learning systems. For the United States, the stakes could not be higher. If Sutskever is right, the next decade will define the next century.
